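Editor: About Android Programming

This article is a summary of notes from my own reading of the source code. Please credit the source when reposting. Thanks.

Feedback and discussion are welcome. QQ: 1037701636, email: gzzaigcn2012@gmail.com

Android source version: 4.2.2; hardware platform: Allwinner A31

step1: As covered earlier, setPreviewWindow on the CameraService side passes a window to the HAL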

status_t setPreviewWindow(const sp<ANativeWindow>& buf)

{

ALOGV("%s(%s) buf %p", __FUNCTION__, mName.string(), buf.get());

if (mDevice->ops->set_preview_window) {

mPreviewWindow = buf;

mHalPreviewWindow.user = this;

ALOGV("%s &mHalPreviewWindow %p mHalPreviewWindow.user %p", __FUNCTION__,

&mHalPreviewWindow, mHalPreviewWindow.user);

return mDevice->ops->set_preview_window(mDevice,

buf.get() ? &mHalPreviewWindow.nw : 0); // call the underlying hardware HAL interface

}

return INVALID_OPERATION;

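}

The buf argument is a Surface (an ANativeWindow). Note that the HAL is not handed the Surface itself but &mHalPreviewWindow.nw, the table of preview-stream operations defined below: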

struct camera_preview_window {

struct preview_stream_ops nw;

void *user;

};

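This variable is initialized as follows. The hooks look familiar: in SurfaceFlinger, a client-side Surface uses the same interfaces to request graphic buffers from SurfaceFlinger and to submit them for display. In the earlier articles those operations were left to OpenGL ES, whose eglSwapBuffers() performs dequeueBuffer and queueBuffer on the native window. In the Camera preview path they are carried out manually.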

void initHalPreviewWindow()

{

mHalPreviewWindow.nw.cancel_buffer = __cancel_buffer;

mHalPreviewWindow.nw.lock_buffer = __lock_buffer;

mHalPreviewWindow.nw.dequeue_buffer = __dequeue_buffer;

mHalPreviewWindow.nw.enqueue_buffer = __enqueue_buffer;

mHalPreviewWindow.nw.set_buffer_count = __set_buffer_count;

mHalPreviewWindow.nw.set_buffers_geometry = __set_buffers_geometry;

mHalPreviewWindow.nw.set_crop = __set_crop;

mHalPreviewWindow.nw.set_timestamp = __set_timestamp;

mHalPreviewWindow.nw.set_usage = __set_usage;

mHalPreviewWindow.nw.set_swap_interval = __set_swap_interval;

mHalPreviewWindow.nw.get_min_undequeued_buffer_count =

__get_min_undequeued_buffer_count;

}

step2. Continuing with the preview path: CameraClient in CameraService has already called CameraHardwareInterface::startPreview(), which simply forwards to the camera device in the HAL:

status_t startPreview()

{

ALOGV("%s(%s)", __FUNCTION__, mName.string());

if (mDevice->ops->start_preview)

return mDevice->ops->start_preview(mDevice);

return INVALID_OPERATION;

}

step3. Entering the HAL to see how preview is handled

status_t CameraHardware::doStartPreview(){

...........

res = camera_dev->startDevice(mCaptureWidth, mCaptureHeight, org_fmt, video_hint); // start the device

......

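}

This calls into the V4L2 device to start capturing the video stream. startDevice() makes it clear that starting the preview really means starting video capture.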

status_t V4L2CameraDevice::startDevice(int width,

int height,

uint32_t pix_fmt,

bool video_hint)

{

LOGD("%s, wxh: %dx%d, fmt: %d", __FUNCTION__, width, height, pix_fmt);

Mutex::Autolock locker(&mObjectLock);

if (!isConnected())

{

LOGE("%s: camera device is not connected.", __FUNCTION__);

return EINVAL;

}

if (isStarted())

{

LOGE("%s: camera device is already started.", __FUNCTION__);

return EINVAL;

}

// VE encoder need this format

mVideoFormat = pix_fmt;

mCurrentV4l2buf = NULL;

mVideoHint = video_hint;

mCanBeDisconnected = false;

// set capture mode and fps

// CHECK_NO_ERROR(v4l2setCaptureParams()); // do not check this error

v4l2setCaptureParams();

// set v4l2 device parameters, it maybe change the value of mFrameWidth and mFrameHeight.

CHECK_NO_ERROR(v4l2SetVideoParams(width, height, pix_fmt));

// v4l2 request buffers

int buf_cnt = (mTakePictureState == TAKE_PICTURE_NORMAL) ? 1 : NB_BUFFER;

CHECK_NO_ERROR(v4l2ReqBufs(&buf_cnt)); // request buffers from the driver

mBufferCnt = buf_cnt;

// v4l2 query buffers

CHECK_NO_ERROR(v4l2QueryBuf()); // query the buffers and mmap them

// stream on the v4l2 device

CHECK_NO_ERROR(v4l2StartStreaming()); // start capturing the video stream

mCameraDeviceState = STATE_STARTED;

mContinuousPictureAfter = 1000000 / 10;

mFaceDectectAfter = 1000000 / 15;

mPreviewAfter = 1000000 / 24;

return NO_ERROR;

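}

This closely follows the standard V4L2 video capture flow:

1. v4l2setCaptureParams(): set the capture parameters (capture mode and frame rate);

2. v4l2SetVideoParams(): set the frame size and pixel format, which the driver may adjust;

3. v4l2ReqBufs(): request capture buffers from the driver;

4. v4l2QueryBuf(): query the kernel's image buffers and map all of them into the current process so that user space can work on them directly;

5. v4l2StartStreaming(): start the V4L2 capture stream.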
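For readers less familiar with V4L2, the sequence above corresponds roughly to the standard ioctl calls sketched here. This is a minimal sketch against the generic Linux V4L2 API, not the Allwinner HAL code: the names `startCapture`, `failCapture` and `Mapping`, and the choice of YUYV as the pixel format, are assumptions made for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

#include <fcntl.h>
#include <linux/videodev2.h>
#include <sys/ioctl.h>
#include <sys/mman.h>
#include <unistd.h>

// Hypothetical record of one mmap'ed capture buffer.
struct Mapping {
    void*       start;
    std::size_t length;
};

// Undo a partially completed setup: unmap everything, close the device.
static int failCapture(int fd, std::vector<Mapping>* maps) {
    for (const Mapping& m : *maps) munmap(m.start, m.length);
    maps->clear();
    close(fd);
    return -1;
}

// Returns a streaming file descriptor, or -1 on any failure.
// `maps` is expected to be empty on entry.
int startCapture(const char* devPath, unsigned width, unsigned height,
                 unsigned bufCount, std::vector<Mapping>* maps) {
    int fd = open(devPath, O_RDWR);
    if (fd < 0) return -1;

    // 1. Negotiate the frame format (the driver may adjust width/height).
    v4l2_format fmt;
    std::memset(&fmt, 0, sizeof(fmt));
    fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
    fmt.fmt.pix.width = width;
    fmt.fmt.pix.height = height;
    fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;  // assumed format
    fmt.fmt.pix.field = V4L2_FIELD_ANY;
    if (ioctl(fd, VIDIOC_S_FMT, &fmt) < 0) return failCapture(fd, maps);

    // 2. Ask the driver to allocate capture buffers.
    v4l2_requestbuffers req;
    std::memset(&req, 0, sizeof(req));
    req.count = bufCount;
    req.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
    req.memory = V4L2_MEMORY_MMAP;
    if (ioctl(fd, VIDIOC_REQBUFS, &req) < 0) return failCapture(fd, maps);

    // 3. Query each buffer, map it by index, and queue it for capture.
    for (unsigned i = 0; i < req.count; ++i) {
        v4l2_buffer buf;
        std::memset(&buf, 0, sizeof(buf));
        buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
        buf.memory = V4L2_MEMORY_MMAP;
        buf.index = i;
        if (ioctl(fd, VIDIOC_QUERYBUF, &buf) < 0) return failCapture(fd, maps);

        void* p = mmap(NULL, buf.length, PROT_READ | PROT_WRITE, MAP_SHARED,
                       fd, buf.m.offset);
        if (p == MAP_FAILED) return failCapture(fd, maps);
        maps->push_back(Mapping{p, buf.length});

        if (ioctl(fd, VIDIOC_QBUF, &buf) < 0) return failCapture(fd, maps);
    }

    // 4. Start streaming; frames can now be fetched with VIDIOC_DQBUF.
    int type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
    if (ioctl(fd, VIDIOC_STREAMON, &type) < 0) return failCapture(fd, maps);
    return fd;
}
```

A caller would pass a real device node (for example `/dev/video0`, if one exists on the system) and later stop the stream with VIDIOC_STREAMOFF, unmap the buffers, and close the descriptor.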

step4: the capture thread, bool V4L2CameraDevice::captureThread()

The function is fairly involved, but its core is ret = getPreviewFrame(&buf), which fetches the current frame:

int V4L2CameraDevice::getPreviewFrame(v4l2_buffer *buf)

{

int ret = UNKNOWN_ERROR;

buf->type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf->memory = V4L2_MEMORY_MMAP;

ret = ioctl(mCameraFd, VIDIOC_DQBUF, buf); // dequeue one frame of data

if (ret < 0)

{

LOGW("GetPreviewFrame: VIDIOC_DQBUF Failed, %s", strerror(errno));

return __LINE__; // can not return false

}

return OK;

}

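This issues the standard VIDIOC_DQBUF ioctl, which dequeues one filled buffer from the driver and makes it available to user space for display.

The platform defines a V4L2BUF_t struct to describe one captured frame. It records the physical addresses of the Y and C planes together with their user-space virtual addresses. The virtual addresses are mappings of the kernel capture buffers.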

typedef struct V4L2BUF_t

{

unsigned int addrPhyY; // physical Y address of this frame

unsigned int addrPhyC; // physical C address of this frame

unsigned int addrVirY; // virtual Y address of this frame

unsigned int addrVirC; // virtual C address of this frame

unsigned int width;

unsigned int height;

int index; // DQUE id number

long long timeStamp; // time stamp of this frame

RECT_t crop_rect;

int format;

void* overlay_info;

// thumb

unsigned char isThumbAvailable;

unsigned char thumbUsedForPreview;

unsigned char thumbUsedForPhoto;

unsigned char thumbUsedForVideo;

unsigned int thumbAddrPhyY; // physical Y address of thumb buffer

unsigned int thumbAddrVirY; // virtual Y address of thumb buffer

unsigned int thumbWidth;

unsigned int thumbHeight;

RECT_t thumb_crop_rect;

int thumbFormat;

int refCnt; // used for releasing this frame

unsigned int bytesused; // used by compressed source

}V4L2BUF_t;

Here is the code that fills in the struct:

V4L2BUF_t v4l2_buf;

if (mVideoFormat != V4L2_PIX_FMT_YUYV

&& mCaptureFormat == V4L2_PIX_FMT_YUYV)

{

v4l2_buf.addrPhyY = mVideoBuffer.buf_phy_addr[buf.index];

v4l2_buf.addrVirY = mVideoBuffer.buf_vir_addr[buf.index];

}

else

{

v4l2_buf.addrPhyY = buf.m.offset & 0x0fffffff; // physical address in the kernel

v4l2_buf.addrVirY = (unsigned int)mMapMem.mem[buf.index]; // virtual address

}

v4l2_buf.index = buf.index; // index of the driver's capture buffer

v4l2_buf.timeStamp = mCurFrameTimestamp;

v4l2_buf.width = mFrameWidth;

v4l2_buf.height = mFrameHeight;

v4l2_buf.crop_rect.left = mRectCrop.left;

v4l2_buf.crop_rect.top = mRectCrop.top;

v4l2_buf.crop_rect.width = mRectCrop.right - mRectCrop.left + 1;

v4l2_buf.crop_rect.height = mRectCrop.bottom - mRectCrop.top + 1;

v4l2_buf.format = mVideoFormat;

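The addrPhy* and addrVir* fields hold the physical addresses and the user-space virtual addresses of the Y and C planes; the snippet above fills in the Y entries. Both values are looked up directly from the index of the current buffer. Why? When the kernel capture buffers were mmap'ed, each buffer was mapped into user space by its index, and its physical and virtual addresses were recorded at that point. This mainly makes the later display step easier.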
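The index-based lookup can be illustrated with a small stand-alone sketch. Everything here is hypothetical (`MappedBuffer`, `FrameInfo`, `Rect`, `describeFrame`); it only mirrors the shape of the HAL code above: a table filled once at mmap time, then consulted with the index that VIDIOC_DQBUF returns. The 28-bit mask is copied from the article's code and should be read as a platform-specific assumption.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical record of one capture buffer, filled in once at mmap time.
struct MappedBuffer {
    std::uintptr_t virtAddr;  // user-space address returned by mmap()
    std::uint32_t  offset;    // driver-reported offset for this buffer
};

// Hypothetical per-frame descriptor, a cut-down stand-in for V4L2BUF_t.
struct FrameInfo {
    int            index;
    std::uintptr_t addrVirY;
    std::uint32_t  addrPhyY;
    unsigned       cropWidth;
    unsigned       cropHeight;
};

// Rectangle with inclusive right/bottom edges, as in the snippet above.
struct Rect {
    int left, top, right, bottom;
};

// Build the descriptor for a dequeued frame from nothing but its index.
FrameInfo describeFrame(const std::vector<MappedBuffer>& table,
                        int dequeuedIndex, const Rect& crop) {
    const MappedBuffer& b = table.at(dequeuedIndex);  // throws if out of range
    FrameInfo f;
    f.index = dequeuedIndex;
    f.addrVirY = b.virtAddr;
    // Same masking as the article's code; that the low 28 bits carry the
    // physical address is an assumption about this platform's driver.
    f.addrPhyY = b.offset & 0x0fffffffu;
    // right/bottom are inclusive, hence the +1.
    f.cropWidth = static_cast<unsigned>(crop.right - crop.left + 1);
    f.cropHeight = static_cast<unsigned>(crop.bottom - crop.top + 1);
    return f;
}
```

Because the table is built once, per-frame work reduces to an array lookup; no mapping or address translation happens on the capture path.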

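step5: the preview thread, bool V4L2CameraDevice::previewThread()

Once a frame has been captured, the preview thread must be notified so that it can display the image. The capture thread and the preview thread synchronize through a condition variable (not an inter-process lock): the preview thread blocks in pthread_cond_wait(&mPreviewCond, &mPreviewMutex) until it is signaled.

bool V4L2CameraDevice::previewThread() // preview thread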

{

V4L2BUF_t * pbuf = (V4L2BUF_t *)OSAL_Dequeue(&mQueueBufferPreview); // fetch the descriptor of the next preview frame

if (pbuf == NULL)

{

// LOGV("picture queue no buffer, sleep...");

pthread_mutex_lock(&mPreviewMutex);

pthread_cond_wait(&mPreviewCond, &mPreviewMutex); // wait for the capture thread's signal

pthread_mutex_unlock(&mPreviewMutex);

return true;

}

Mutex::Autolock locker(&mObjectLock);

if (mMapMem.mem[pbuf->index] == NULL

|| pbuf->addrPhyY == 0)

{

LOGV("preview buffer have been released...");

return true;

}

// callback

mCallbackNotifier->onNextFrameAvailable((void*)pbuf, mUseHwEncoder); // deliver the captured frame through the callback

// preview

if (isPreviewTime()) // time to show a preview frame

{

mPreviewWindow->onNextFrameAvailable((void*)pbuf); // the frame is ready for display

}

// LOGD("preview id : %d", pbuf->index);

releasePreviewFrame(pbuf->index);

return true;

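}

The preview thread does two main things: it delivers the frame data through a callback for the upper layers to use, and it sends the frame to the display.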
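The hand-off between the capture thread and the preview thread is a producer/consumer queue guarded by a condition variable. The sketch below shows that pattern with std::condition_variable rather than the HAL's pthread calls; `FrameQueue` and `runPipeline` are hypothetical names. One deliberate difference from the code above: the queue is checked under the same mutex the waiter sleeps on, with a predicate, which is the usual way to rule out missed or spurious wakeups.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical frame queue shared by a capture thread and a preview thread.
class FrameQueue {
public:
    // Capture side: publish a frame index and wake the preview thread.
    void push(int frameIndex) {
        {
            std::lock_guard<std::mutex> lock(mMutex);
            mFrames.push_back(frameIndex);
        }
        mCond.notify_one();
    }

    // Capture side: signal that no more frames will arrive.
    void close() {
        {
            std::lock_guard<std::mutex> lock(mMutex);
            mClosed = true;
        }
        mCond.notify_all();
    }

    // Preview side: block until a frame is available.
    // Returns false once the queue is closed and fully drained.
    bool pop(int* out) {
        std::unique_lock<std::mutex> lock(mMutex);
        mCond.wait(lock, [this] { return !mFrames.empty() || mClosed; });
        if (mFrames.empty()) return false;
        *out = mFrames.front();
        mFrames.pop_front();
        return true;
    }

private:
    std::mutex mMutex;
    std::condition_variable mCond;
    std::deque<int> mFrames;
    bool mClosed = false;
};

// Runs one producer and one consumer; returns frame indices in display order.
std::vector<int> runPipeline(int frameCount) {
    FrameQueue queue;
    std::vector<int> shown;
    std::thread preview([&] {
        int idx;
        while (queue.pop(&idx)) shown.push_back(idx);  // "display" the frame
    });
    for (int i = 0; i < frameCount; ++i) queue.push(i);  // "capture" frames
    queue.close();
    preview.join();
    return shown;
}
```

In the HAL the consumer would also return the buffer to the driver after display, as releasePreviewFrame() does above; that step is left out here to keep the synchronization visible.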

step6: how does the preview thread display a frame?

bool PreviewWindow::onNextFrameAvailable(const void* frame) // initialized with the native window (Surface)

{

int res;

Mutex::Autolock locker(&mObjectLock);

V4L2BUF_t * pv4l2_buf = (V4L2BUF_t *)frame; // address information for one frame

......

res = mPreviewWindow->set_buffers_geometry(mPreviewWindow,

mPreviewFrameWidth,

mPreviewFrameHeight,

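format); // set the geometry attributes of the native window's buffers

......

res = mPreviewWindow->dequeue_buffer(mPreviewWindow, &buffer, &stride); // ask SurfaceFlinger's BufferQueue for a graphic buffer; a handle usable in this process is returned in buffer

..................

res = grbuffer_mapper.lock(*buffer, GRALLOC_USAGE_SW_WRITE_OFTEN, rect, &img); // lock the buffer; its mapped address is returned in img, ready to be filled

.............

mPreviewWindow->enqueue_buffer(mPreviewWindow, buffer); // hand the buffer to SurfaceFlinger for display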

............

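}

The code above implements the delivery of native-window images to SurfaceFlinger. To see why, consider the following:

1. What is the mPreviewWindow member of the PreviewWindow class?

It originates from the application's setPreviewDisplay() call and is passed into the HAL by CameraHardwareInterface::setPreviewWindow() (see step1):

return mDevice->ops->set_preview_window(mDevice,

buf.get() ? &mHalPreviewWindow.nw : 0); // call the underlying hardware HAL interface

}

The nw operations and their initialization were covered in step1.

2. Take dequeue_buffer as an example: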

static int __dequeue_buffer(struct preview_stream_ops* w,

buffer_handle_t** buffer, int *stride)

{

int rc;

ANativeWindow *a = anw(w);

ANativeWindowBuffer* anb;

rc = native_window_dequeue_buffer_and_wait(a, &anb);

if (!rc) {

*buffer = &anb->handle;

*stride = anb->stride;

}

return rc;

}

This calls into the native window. The ANativeWindow object is obtained from w through the anw() macro, which is implemented as follows:

static ANativeWindow *__to_anw(void *user)

{

CameraHardwareInterface *__this =

reinterpret_cast<CameraHardwareInterface *>(user);

return __this->mPreviewWindow.get();

}

#define anw(n) __to_anw(((struct camera_preview_window *)n)->user)

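Because nw is the first member of struct camera_preview_window, the preview_stream_ops pointer can be cast to the enclosing struct. Its user field is then cast back to the CameraHardwareInterface object, which yields the Surface stored earlier in its mPreviewWindow member (a native window, i.e. an ANativeWindow).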
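The cast behind anw() is easy to miss, so here is a minimal, self-contained sketch of the same pattern. All names (`StreamOps`, `WindowBridge`, `Owner`, `ownerFromOps`) are hypothetical stand-ins rather than the AOSP types; the sketch relies only on the ops table being the first member of a standard-layout wrapper struct.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical C-style callback table, standing in for preview_stream_ops.
struct StreamOps {
    int (*dequeue)(StreamOps* self, int* outSlot);
};

// Hypothetical wrapper, standing in for camera_preview_window:
// the ops table comes first, followed by an opaque back-pointer.
struct WindowBridge {
    StreamOps ops;
    void*     user;
};

static_assert(offsetof(WindowBridge, ops) == 0, "ops must be the first member");

// Hypothetical owner, standing in for CameraHardwareInterface.
class Owner {
public:
    explicit Owner(int firstSlot) : mNextSlot(firstSlot) {
        mBridge.ops.dequeue = &Owner::dequeueThunk;
        mBridge.user = this;
    }

    // This is the only pointer the "HAL" side ever sees.
    StreamOps* ops() { return &mBridge.ops; }

    int nextSlot() { return mNextSlot++; }

private:
    // The callee receives only a StreamOps*. Since `ops` sits at offset 0,
    // that pointer has the same address as the enclosing WindowBridge,
    // so the cast recovers the wrapper and, through it, `user`.
    static Owner* ownerFromOps(StreamOps* w) {
        WindowBridge* bridge = reinterpret_cast<WindowBridge*>(w);
        return static_cast<Owner*>(bridge->user);
    }

    // Plain function usable as a C callback; forwards to the C++ object.
    static int dequeueThunk(StreamOps* self, int* outSlot) {
        *outSlot = ownerFromOps(self)->nextSlot();
        return 0;
    }

    WindowBridge mBridge;
    int mNextSlot;
};
```

The design keeps the HAL interface pure C (a struct of function pointers) while still letting each callback reach the C++ object that installed it.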

3. Native window operations

static inline int native_window_dequeue_buffer_and_wait(ANativeWindow *anw,

struct ANativeWindowBuffer** anb) {

return anw->dequeueBuffer_DEPRECATED(anw, anb);

}

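What happens here is a call on the Surface object created by the application. That object was parceled and passed to CameraService, which uses it for drawing and rendering. On the BpCamera side:

// pass the buffered Surface to the camera service

status_t setPreviewDisplay(const sp<Surface>& surface)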

{

ALOGV("setPreviewDisplay");

Parcel data, reply;

data.writeInterfaceToken(ICamera::getInterfaceDescriptor());

Surface::writeToParcel(surface, &data); // parcel the Surface

remote()->transact(SET_PREVIEW_DISPLAY, data, &reply);

return reply.readInt32();

}

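On the BnCamera side, a new Surface is created inside CameraService, but it is initialized entirely from the client's parameters. The two objects live in different processes yet carry exactly the same information.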

case SET_PREVIEW_DISPLAY: {

ALOGV("SET_PREVIEW_DISPLAY");

CHECK_INTERFACE(ICamera, data, reply);

sp<Surface> surface = Surface::readFromParcel(data);

reply->writeInt32(setPreviewDisplay(surface)); // set the surface

return NO_ERROR;

} break;

Surface::Surface(const Parcel& parcel, const sp<IBinder>& ref)

: SurfaceTextureClient()

{

mSurface = interface_cast<ISurface>(ref);

sp<IBinder> st_binder(parcel.readStrongBinder());

sp<ISurfaceTexture> st;

if (st_binder != NULL) {

st = interface_cast<ISurfaceTexture>(st_binder);

} else if (mSurface != NULL) {

st = mSurface->getSurfaceTexture();

}

mIdentity = parcel.readInt32();

init(st);

}

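The Surface constructed here talks to SurfaceFlinger through mSurface. The Surface on the Camera client side originally communicated with SurfaceFlinger over Binder. Now the corresponding Bpxxx proxies are written into CameraService so that it can carry on the Binder communication with SurfaceFlinger itself, for example with SurfaceFlinger's BufferQueue during queueBuffer().

4. The anw->dequeueBuffer call therefore matches, step for step, the client-side setup described in the earlier article on understanding SurfaceFlinger through Android BootAnimation. The only difference is that the Surface created by the BootAnimation process is handed to OpenGL ES, which performs the low-level dequeue (request a buffer and fill it) and enqueue (queue it for rendering) operations. See the article "Android 4.2.2 SurfaceFlinger: graphic buffer request and allocation with dequeueBuffer". The drawing details are not repeated here. Through this path the camera preview is connected to SurfaceFlinger, which ultimately displays the frames.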
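The dequeue, lock, fill, enqueue cycle from step6 can be tried out without Android at all. The sketch below uses a hypothetical in-process `MockBufferQueue` in place of the real BufferQueue and gralloc mapper; it models only the slot ownership hand-off, not Binder, fences, or real graphic memory.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <deque>
#include <vector>

// Hypothetical stand-in for a buffer queue: a fixed pool of slots that
// move between "free" and "queued for display".
class MockBufferQueue {
public:
    MockBufferQueue(int slots, std::size_t bytesPerSlot)
        : mStorage(slots, std::vector<std::uint8_t>(bytesPerSlot)) {
        for (int i = 0; i < slots; ++i) mFree.push_back(i);
    }

    // Producer: take a free slot. Returns -1 when none is available.
    int dequeue() {
        if (mFree.empty()) return -1;
        int slot = mFree.front();
        mFree.pop_front();
        return slot;
    }

    // Producer: CPU-writable pointer to the slot's pixels.
    std::uint8_t* lock(int slot) { return mStorage.at(slot).data(); }

    // Producer: give back a dequeued slot without displaying it.
    void cancel(int slot) { mFree.push_front(slot); }

    // Producer: hand the filled slot over for display.
    void enqueue(int slot) { mQueued.push_back(slot); }

    // Consumer: take the oldest queued slot, "display" it, free it again.
    int acquireAndRelease() {
        if (mQueued.empty()) return -1;
        int slot = mQueued.front();
        mQueued.pop_front();
        mFree.push_back(slot);
        return slot;
    }

    const std::vector<std::uint8_t>& pixels(int slot) const {
        return mStorage.at(slot);
    }

private:
    std::vector<std::vector<std::uint8_t>> mStorage;
    std::deque<int> mFree;
    std::deque<int> mQueued;
};

// One preview iteration: dequeue, lock, copy the captured frame, enqueue.
bool postFrame(MockBufferQueue& q, const std::vector<std::uint8_t>& frame) {
    int slot = q.dequeue();
    if (slot < 0) return false;                    // no free buffer right now
    if (frame.size() > q.pixels(slot).size()) {    // frame would not fit
        q.cancel(slot);
        return false;
    }
    std::memcpy(q.lock(slot), frame.data(), frame.size());
    q.enqueue(slot);
    return true;
}
```

With two slots, two frames can be in flight at once; a third post fails until the consumer releases a slot, which is the back-pressure that a fixed buffer count imposes on the producer.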

首頁-底部Tab導航(菜單欄)的實現:FragmentTabHost+ViewPager+Fragment

首頁-底部Tab導航(菜單欄)的實現:FragmentTabHost+ViewPager+Fragment

前言Android開發中使用底部菜單欄的頻次非常高,主要的實現手段有以下:- TabWidget- 隱藏TabWidget,使用RadioGroup和RadioButto

Android二維碼ZXING3.0(201403發布)接入

Android二維碼ZXING3.0(201403發布)接入

ZXING開源項目官方網站https://github.com/zxing/zxing/tree/zxing-3.0.0。 架包下載地址http://repo1.mave

Android控件開發之Gallery3D酷炫效果(帶源碼)

Android控件開發之Gallery3D酷炫效果(帶源碼)

前言:因為要做一個設置開機畫面的功能,主要是讓用戶可以設置自己的開機畫面,應用層需要做讓用戶選擇開機畫面圖片的功能。所以需要做一個簡單的圖片浏覽選擇程序。最後選用Gall

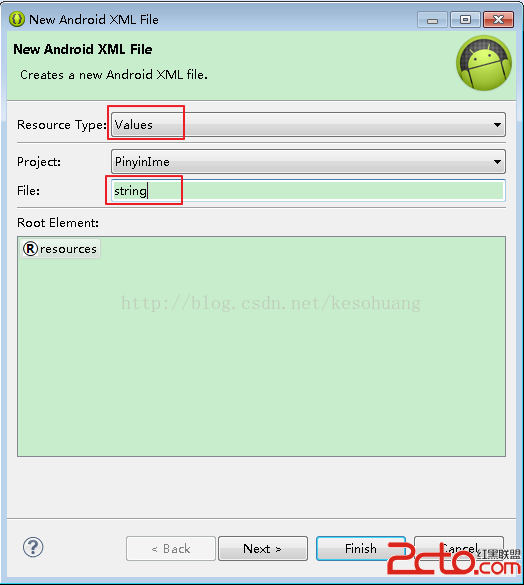

android實現多語言自動切換字體

android實現多語言自動切換字體

我們建好一個android 的項目後,默認的res下面 有layout、values、drawable等目錄 這些都是程序默認的資源文件目錄,如果要實現多語言版本的話